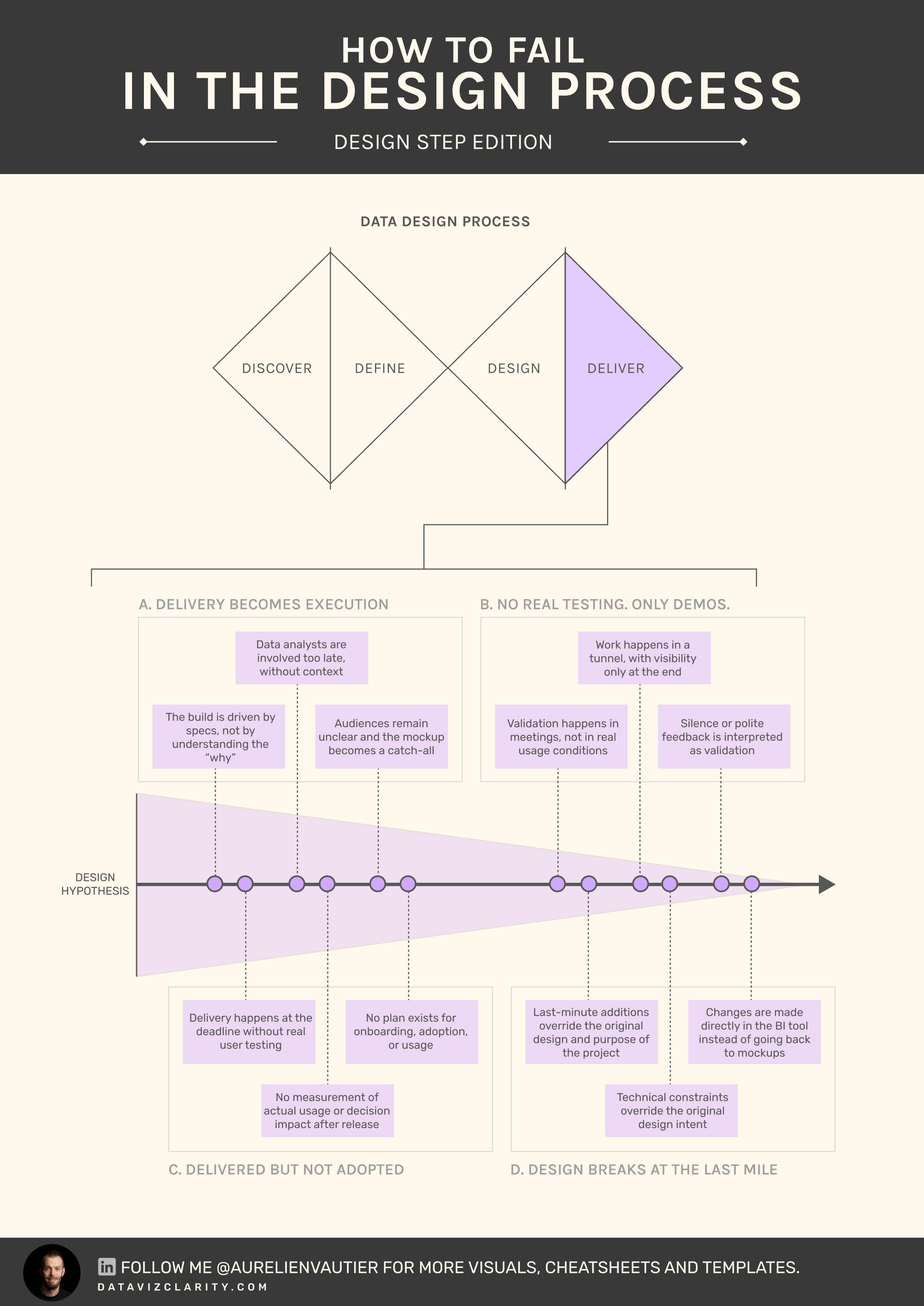

What could go wrong during 'Deliver'? - Part 4

The final delivery is often treated as the finish line.

In reality, it’s where most dashboards quietly die.

Dashboards are delivered on time.

Reviewed. Approved. Even praised.

Then adoption stalls.

Usage drops by week 2.

Decisions don’t change.

The real problem is not the quality of the dashboard.

It’s the illusion of validation.

Delivery is treated as a presentation moment, not a confrontation with reality.

- No real testing.

- No real friction.

- No real ownership.

Time is wasted shipping products that will never be used.

The Delivery Integrity Model

A simple way to assess how robust your delivery really is:

- Presentation: The dashboard is shown. Feedback is polite. No real usage.

- Approval: Stakeholders validate in meetings. No real-world testing.

- Exposure: Users access the dashboard, but without guidance or context.

- Usage: The dashboard is used in real workflows, with friction and feedback.

- Adoption: The dashboard is embedded in decision-making rituals.

Most teams stop at level 2.

They think they’ve delivered. They’ve only presented.
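For teams that like to make this explicit, the five levels above can be sketched as a small self-check. This is only an illustration: the names `DeliveryLevel`, `DeliverySignals`, and `assess`, and the four yes/no signals, are assumptions made for this example, not part of any BI tool or of a formal scoring method.

```python
from dataclasses import dataclass
from enum import IntEnum


class DeliveryLevel(IntEnum):
    """The five levels of the Delivery Integrity Model, lowest to highest."""
    PRESENTATION = 1
    APPROVAL = 2
    EXPOSURE = 3
    USAGE = 4
    ADOPTION = 5


@dataclass
class DeliverySignals:
    # Hypothetical yes/no observations, answered honestly by the team.
    approved_in_meeting: bool = False       # stakeholders said yes in a meeting
    users_have_access: bool = False         # real users can open the dashboard
    used_in_real_workflows: bool = False    # it is used in actual work, with feedback
    part_of_decision_rituals: bool = False  # recurring decisions are made from it


def assess(signals: DeliverySignals) -> DeliveryLevel:
    """Return the highest level reached. A level only counts if every level below it holds."""
    level = DeliveryLevel.PRESENTATION
    checks = [
        (signals.approved_in_meeting, DeliveryLevel.APPROVAL),
        (signals.users_have_access, DeliveryLevel.EXPOSURE),
        (signals.used_in_real_workflows, DeliveryLevel.USAGE),
        (signals.part_of_decision_rituals, DeliveryLevel.ADOPTION),
    ]
    for passed, reached in checks:
        if not passed:
            break  # stop at the first missing signal
        level = reached
    return level


# A dashboard that was demoed and approved, and nothing more.
demo_only = DeliverySignals(approved_in_meeting=True)
print(assess(demo_only).name)
```

The point of the strict ordering is that a later signal cannot compensate for an earlier one: a thumbs-up in a meeting never lifts a dashboard above Approval on its own.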

Another quick corporate example

A data team delivers a new sales dashboard.

Final demo goes well.

Everyone agrees it "looks good".

Two weeks later, regional managers export data to Excel again.

The dashboard exists, but decisions haven’t moved, and the real work still happens outside the BI tool.

Nothing failed technically.

Delivery just never reached reality.

A dashboard validated in a meeting is just a well-designed assumption.

Thumbs-up in Google Meet are not validation. They are the absence of friction.

If dashboards are being delivered but not used, the issue is rarely visual. It’s structural.

I run diagnostics to assess where delivery breaks between design, validation, and adoption.

Have a great week!
